SEEOcta Data: Big Data – How Data can Generate Revenue

Masses of data are being generated every day. Companies are therefore increasingly looking for solutions to make good use of this data, to help them define competitive advantages and identify new ways of doing business. On the one hand, this requires having the technical infrastructure to be able to analyse – and protect – big data. On the other hand, you also need a carefully considered management strategy in order to transform this big data into a profitable business model, as well as specialists who know how to make the required data available and useable.

The SEEOcta blog series highlights the eight most important perspectives for successful project management. Discover all the areas you need to consider when planning digitalisation and integration projects in your company. Armed with the ideas and knowledge in the articles, you will have a solid foundation for planning your IT project and a guide to help you ensure that no one gets left behind.

What is big data?

Big data is an umbrella term for the methods and technologies that can be used to collect, store and analyse data of all kinds. This data can come from a wide variety of sources:

| Internal data sources | External data sources |
| --- | --- |
| Customer data | Social media |
| ERP module | Official statistics |
| Data from technical networks | Weather forecasts |
| Internal documents | Data sets in the public realm |
| Sensors | Geo data |
| In-house call centre | Traffic data |
| Data from transmitters, such as those in RFID transponders | |
| Audio data from call centres | |
| Images from CCTV | |
| Website logs | |

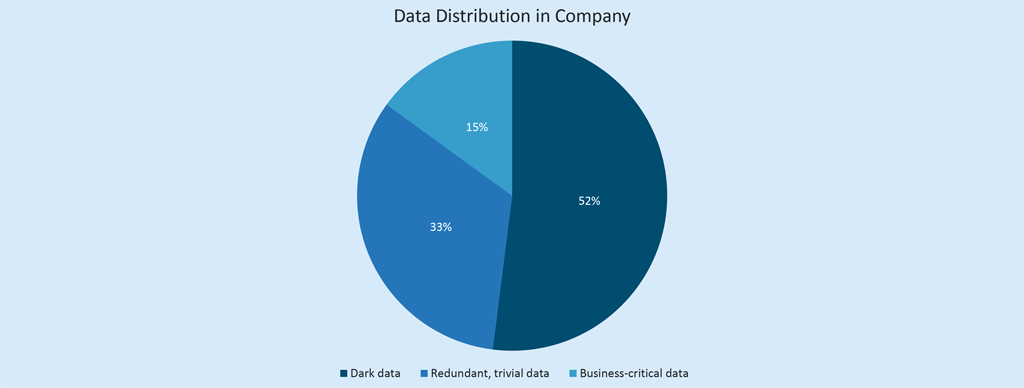

By 2025, these and many other sources are expected to have generated an estimated 163 zettabytes of data worldwide. [1] What’s particularly surprising is that, according to the Oxford Mathematics Centre, over half of the captured, stored data will never be used. This is referred to as dark data.

Good preparation is key to effectively using big data.

In order to ensure you have meaningful data – high quality, useful and trustworthy – your data first needs to be cleaned up. Basically, the value of your data – and the results derived from it – depends on three main factors:

- Its source

- Its completeness

- Its purity

In the study Preparing Clean Views of Data for Data Mining[2] by the London Guildhall University in cooperation with the Rutherford Appleton Laboratory, it is postulated that preparing data can take 60%-80% of the time planned for a data-driven project. This is because data arrives in very different forms:

- Structured (SQL, spreadsheets)

- Semi-structured (email, XML, JSON)

- Unstructured (text files, image files, video files)

To start with, you therefore need an infrastructure powerful enough to aggregate all this data, whatever form it arrives in. You then need a method which analyses this data and puts it in a useable form.
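As a rough illustration of what "putting data in a useable form" can mean, the following minimal sketch brings one structured, one semi-structured and one unstructured input into the same record layout. The field names (`customer_id`, `message`, `kind`), the helper functions and all sample inputs are assumptions made up for this example; a real project would need far more robust parsing and validation.

```python
import csv
import io
import json

# All sample inputs below are invented for illustration.
CSV_TEXT = "customer_id,message\n42,Order delayed\n"
JSON_TEXT = '[{"customer": {"id": 7}, "body": "Where is my invoice?"}]'
FREE_TEXT = "  Great service, quick delivery.  "


def from_csv(text):
    """Structured input: every row already has named fields."""
    return [dict(row, kind="structured") for row in csv.DictReader(io.StringIO(text))]


def from_json(text):
    """Semi-structured input: fields may be nested or missing, so read defensively."""
    return [
        {
            "customer_id": str(item.get("customer", {}).get("id", "")),
            "message": item.get("body", ""),
            "kind": "semi-structured",
        }
        for item in json.loads(text)
    ]


def from_text(text, customer_id=""):
    """Unstructured input: no fields exist, so the whole text becomes one value."""
    return [{"customer_id": customer_id, "message": text.strip(), "kind": "unstructured"}]


# Aggregate all three sources into one list of uniformly shaped records.
records = from_csv(CSV_TEXT) + from_json(JSON_TEXT) + from_text(FREE_TEXT)
for record in records:
    print(record)
```

Once every source produces the same record shape, downstream analysis no longer needs to know which format the data originally arrived in.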

Where is big data used?

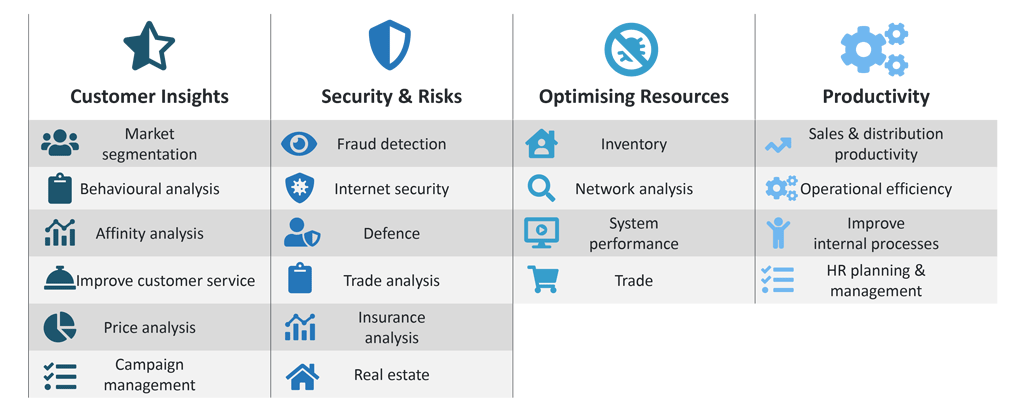

Data can be used in an unbelievable variety of ways, and as figure 2 shows, there is still a wealth of hidden potential to be exploited. Strategically combining data which at first glance seems not to be related can actually be very useful in your planning. Data from historic weather reports and historic supermarket sales data can be used to predict when there is likely to be a run on sun cream, or when you need higher stocks of mulled wine. By analysing what is being typed into online search engines, you can see how quickly an illness may be spreading as people question their symptoms or look for remedies. By analysing the continual stream of status data a machine sends when in operation, you can forecast when a part may need replacing.
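To make the weather and sales example more concrete, here is a minimal sketch that measures how strongly two data series move together using the Pearson correlation coefficient. The temperature and sales figures are invented purely for illustration, and the function name `pearson` is our own. A high correlation is only a hint that a combined data set may be worth modelling; it is not a forecast in itself and does not prove cause and effect.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equally long number series."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sum((x - mean_x) ** 2 for x in xs)
    spread_y = sum((y - mean_y) ** 2 for y in ys)
    return covariance / sqrt(spread_x * spread_y)


# Invented sample data: daily maximum temperature (degrees Celsius)
# and units of sun cream sold on the same days.
temperatures = [14, 17, 21, 24, 28, 31, 33]
sun_cream_sales = [3, 5, 9, 14, 22, 30, 35]

r = pearson(temperatures, sun_cream_sales)
print(f"Correlation between temperature and sun cream sales: {r:.2f}")
```

A value close to 1 means the two series rise together, a value close to -1 means one falls as the other rises, and a value near 0 suggests no linear relationship.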

In our blog post Big Data, IoT and SEEBURGER, we looked at traffic data and described how the SEEBURGER Business Integration Suite (BIS) helps this big data flow into a data lake, converts it there into formats that big data tools can use, and passes it on to the relevant systems. This allows the data to be used not only in real-time traffic systems with a streaming dashboard, but also a step beyond that. Through machine learning, a component of artificial intelligence (AI), it is possible to make very reliable forecasts of the amount of traffic on the roads at certain times of the day.

In practice, there are several more areas where big data is employed:

More and more companies are trying to mine the gold in big data. By examining large amounts of data with various statistical analysis methods, it is possible to identify hidden patterns, correlations and relationships. The information held by big data can therefore give you very valuable insights for strategic decision making or on optimisation potential.

What are the benefits of big data initiatives?

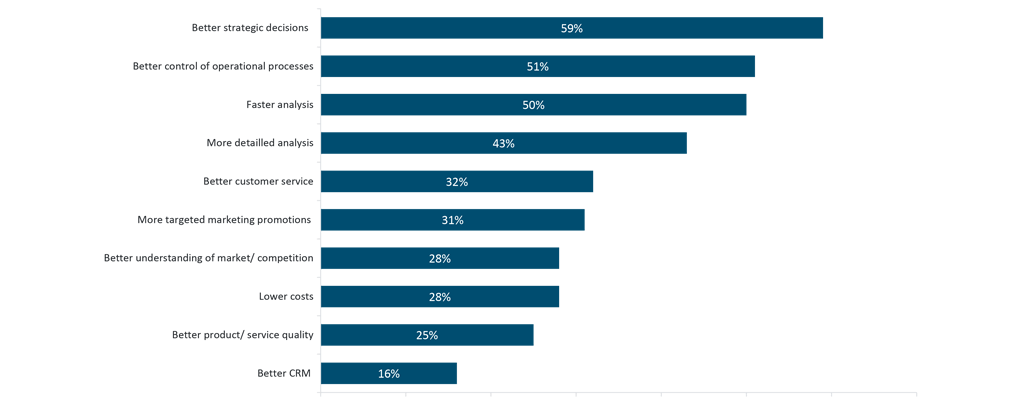

The 2015 BARC study Getting Real on Data Monetization[3] showed that many companies were already deriving considerable benefits from big data at that time by analysing and incorporating large volumes of variously structured data. The information gleaned was used as the basis for strategic decision making (59 percent), enabled more effective steering of operative processes (51 percent), helped companies understand their customers better (32 percent) and generally reduced costs (28 percent).

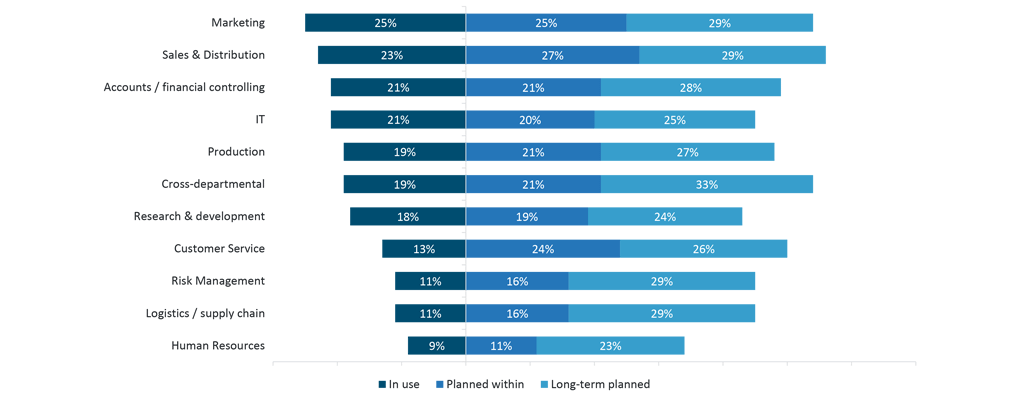

What is particularly noteworthy is that nearly all departments in a company see significant potential for big data use cases. Figure 5 shows that in 2015, more than two-thirds of the companies surveyed were either already using big data in controlling/finance or planning to do so in the future. In addition to cost analysis and optimisation, companies were particularly interested in improving financial planning, budgeting and forecasting.

Customer analysis is the biggest driver of big data projects

As the BARC study shows, one of the most frequent project drivers is analysing customer behaviour in order to appeal to customers in a more targeted and individualised way. As big data can help you win new customers and identify and combat the causes of customer defection at an early stage, marketing and sales tend to be at the forefront of big data projects.

The following data tends to be used in a comprehensive customer analysis:[4]

- Demographic data on gender, age, marital status, number of children etc.

- Transactional data on buying behaviour and take-up of services.

- Data on online behaviour, which shows what customers have viewed, what they have placed on their wish lists or in their shopping carts, and what they have gone on to buy.

- Data from texts written by customers, such as reviews.

- Information on the use of a product or service, such as customer feedback on quality and functionality and on the quality of service (opinions on delivery time, packaging, assembly instructions, etc.).

Both sides benefit from the customer analyses which big data enables – the seller and the customer. Retailers can adapt their product and service range to their customers’ wishes and requirements, thus fulfilling expectations. The customer benefits from a more specific, personalised approach, and also gets to enjoy a higher quality of product and service.

Challenges in using big data

As the name suggests, big data involves huge amounts of data, and this creates a number of challenges. The most important are as follows:

- What data?

In order to answer this question, you first need to know what you want to find out by analysing the data. So, what answers are you looking for? As already described, there is an almost infinite amount of data available, from internal and external sources, and this is increasing daily. This mass of unfiltered and unstructured data entering a company every day through a multitude of channels makes deciding which data to use a Herculean task. It is therefore crucial to define what you are looking for as clearly and concretely as possible. Then, you can decide which data could be useful. You may well find that combining data from seemingly unrelated contexts gives you valuable results.

- Data quality

The amount of structured – and particularly unstructured – data is growing rapidly, which makes data quality increasingly important. To ensure that the results derived from this data are valid and useful for the company, the underlying data needs to be of a correspondingly high quality. As discussed above, this stage alone could take up 60%-80% of your project time. However, it is well worth spending time on carefully tidying up the data. What use are wrong results that lead to wrong decisions and ultimately damage everyone? Garbage in, garbage out: your results can only be as good as the data they are based on. Trying to cancel out errors by further increasing the size of the data set only works if the errors cause ‘random noise’ in the results, rather than the data being fundamentally bad. In practice, data errors tend to be systematic rather than random, meaning that they won’t be cancelled out by increasing the amount of data. Nevertheless, the value and competitive advantage that can be gained from thoroughly and properly analysing cleaned-up, high-quality data is extremely high. (See the SEEBURGER SEEOcta Data blog article on data quality.)

- Data silos

Data is stored in various places in an organisation, often within closed systems. We call these data silos. Unwanted data silos can spring up for a variety of technical or organisational reasons. Typically, they are the result of a missing or insufficient process and data architecture within an organisation. Data silos may be created, for example, when individual departments do not store their data according to a shared corporate data governance policy, but according to their own rules and possibly on separate IT systems. You may therefore find that customer details are saved in separate places by sales & distribution, by the customer service department and by the bookkeepers, with no links between these separate systems. In order to carry out coherent data analysis in this sort of set-up, data sets would need to be copied, one by one, from one silo to another. This is not efficient in any sense of the word. For real-time access to all data, modern applications need to be able to switch between local, private or public clouds.
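Returning to the data quality point in the list above: the claim that more data cancels out random noise but not systematic errors can be illustrated with a small simulation. The following is a minimal sketch under invented assumptions: a hypothetical true value of 20, readings with random noise, and a second set of readings that additionally carry a constant offset of 2 (for example, from a miscalibrated sensor). All names and numbers are ours and serve only as illustration.

```python
import random

TRUE_VALUE = 20.0  # hypothetical quantity being measured
NOISE_SD = 5.0     # spread of the purely random measurement error
BIAS = 2.0         # constant systematic offset, e.g. a miscalibrated sensor


def mean_error(n, bias, rng):
    """Absolute error of the average over n simulated readings."""
    readings = [TRUE_VALUE + bias + rng.gauss(0.0, NOISE_SD) for _ in range(n)]
    return abs(sum(readings) / n - TRUE_VALUE)


rng = random.Random(0)  # fixed seed so that runs are repeatable
for n in (100, 10_000, 200_000):
    random_only = mean_error(n, 0.0, rng)
    with_bias = mean_error(n, BIAS, rng)
    print(f"n={n:>7}: random noise only {random_only:.3f}, with systematic bias {with_bias:.3f}")
```

As the number of readings grows, the error caused by random noise alone shrinks towards zero, while the error of the biased readings settles near the size of the offset. Collecting more of the same flawed data does not repair it; the data has to be cleaned or corrected at the source.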

Big data, security and data protection

The more data that is used in big data initiatives, the higher the risk of data protection and security issues. In general, you need to be aware of the following issues when dealing with big data:

- Falsified, or otherwise bad data

Big data is essentially a mass of data accumulated from a variety of internal and external sources. Initially, the quality and origin of the data are irrelevant. It may therefore contain invalid, falsified or incorrect data, which would then falsify the results of any subsequent analysis.

- Data protection and security

There need to be stringent data protection measures in place to ensure that the data you have captured, especially customer details, is well protected. Measures including encryption, access control and firewalls go some way towards preventing threats such as data leaks, malware or data harvesting.

- Data protection regulations (GDPR)

In big data projects, you need to ensure that the applicable data protection regulations, such as the GDPR, are adhered to for all sources of data.
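One technical measure in the spirit of the protection measures listed above is to replace direct customer identifiers with pseudonyms before the data reaches analysts. The sketch below uses a keyed hash (HMAC-SHA256) from the Python standard library. The function names, the record layout and the key are assumptions for illustration only; in practice the key would be stored securely and separately from the data, and whether such a measure satisfies any particular regulation is a question for your data protection officer, not something this example can answer.

```python
import hashlib
import hmac

# Placeholder key for illustration only; a real key must be kept secret
# and stored separately from the data set.
SECRET_KEY = b"replace-with-a-securely-stored-key"


def pseudonymise(customer_id, secret_key=SECRET_KEY):
    """Map a customer ID to a stable token that does not reveal the ID itself."""
    return hmac.new(secret_key, customer_id.encode("utf-8"), hashlib.sha256).hexdigest()


def pseudonymise_record(record):
    """Return a copy of the record with the customer ID replaced by its token."""
    cleaned = dict(record)
    cleaned["customer_id"] = pseudonymise(record["customer_id"])
    return cleaned


# Invented sample record.
order = {"customer_id": "customer-42", "basket_value": 59.90}
print(pseudonymise_record(order))
```

Because the same ID always maps to the same token, records belonging to one customer can still be linked for analysis, while the original ID is not visible in the analysed data set.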

Conclusion

Data is an asset that companies can employ to help them become or remain successful. These days, we’ve moved on from how to store this mass of data to what the data can do for us. Companies are increasingly using data to secure future competitive advantages. However, the rapidly growing stream of data and how to manage it is one of the key challenges for new digital solutions. For many companies, the possibilities of big data have been the wake-up call they needed to analyse their position, both as a whole and in finer detail. In the future, we will see big data increasingly guiding and influencing corporate decision-making.

[1] Marktmonitor New Players Network

[2] https://www.ercim.eu/publication/ws-proceedings/12th-EDRG/EDRG12_JeDiRe.pdf

[3] BARC: Big Data Use Cases (sas.com)

[4] Based on https://www.scnsoft.de/blog/was-ist-big-data

Written by: Rolf Holicki

Rolf Holicki, Director BU E-Invoicing, SAP&Web Process, is responsible for the SAP/web applications and is a digitization expert. He has more than 25 years of experience in e-invoicing, SAP, workflow and business process automation. Rolf Holicki has been with SEEBURGER since 2005.